Hello again. In the last post, I explored some of the more common biases that affect our perception of what we experience in our daily clinical practice, and how these limit the conclusions and generalisations we can make from our clinical experience. If you haven’t already, I recommend reading it here before starting this one. I ended that post by saying that all forms of information created by humans will reflect our biases to some degree, including research studies.

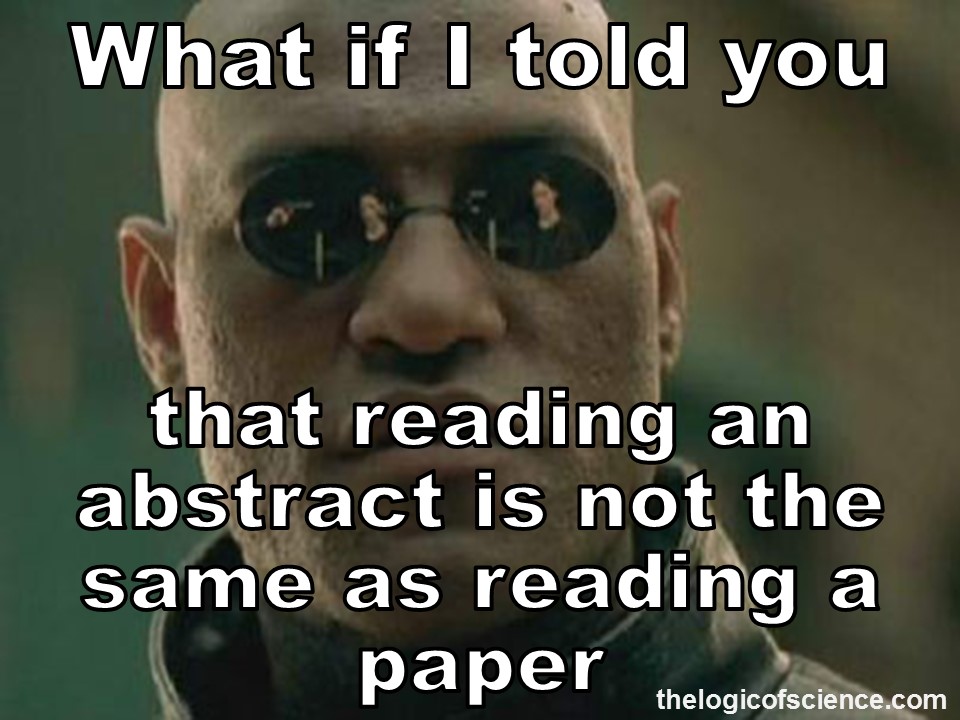

Even though I’m a convinced advocate for science, I will still analyse it critically. It is important to be aware of the flaws that can be present in research studies because, first, all studies are at some risk of bias (Kamper, 2018(a)); and second, scientific evidence, as a key component of evidence-based practice, is still the best way for us to gain knowledge about different elements of healthcare practice, be it diagnostic tests, prevalence of conditions or treatment effectiveness. In addition, as I have previously mentioned, the first epistemological principle of evidence-based practice states that not all evidence is created equal, and we need to be able to tell the better studies from the worse ones.

How do we do this? Precisely by trying to identify the different types of bias that may be present in scientific evidence. When a study has a lot of bias in it, it is more likely to give an inaccurate estimate of what it is trying to measure, limiting the conclusions and knowledge we can gain from that study (Kamper, 2018(a)).

This is not an easy thing to do. There are a lot of studies on the same topic, some of them with completely opposite conclusions. It might look as though one treatment works amazingly when applied by some researchers, but when the same treatment is applied the following week by different researchers, it is no longer beneficial. This is a nightmare because clinical practice involves collecting information from various sources and applying it to reach a diagnosis or decide on a treatment (Kamper, 2018(a)). But knowing about biases in research and how they affect results allows us to better assess the evidence we read: by identifying which biases are present, and which are not, we can understand which information we should give more or less weight to. Now that I’ve explained why being aware of them is useful, let’s actually explore some of these biases.

Attrition Bias

It is quite common in a study, particularly one that runs for a longer period of time, for some participants to stop showing up or stop answering the questionnaires they are sent. The problem with this is that it is not possible to know how that person responded to the intervention after they dropped out (Kamper, 2018(a)).

This could make the data gathered at the end of the study less accurate, depending on factors such as the number of participants who completed the study, the number of participants who left each group, and how comparable the participants who left are to those who completed the study (Kamper, 2018(a)).

This bias can be tackled by trying to ensure that more than 85% of the initial participants are followed up, as well as by performing something called an ‘intention-to-treat analysis’ (Kamper, 2018(a)). This means analysing all the available data from the people who were initially allocated to each of the groups, regardless of whether they completed the study or not (Bowling, 2014).
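To make these two ideas more concrete, here is a minimal Python sketch. The participant records, the scores and the function names are all made up for illustration, and it assumes that the last score recorded for a dropout can stand in for their outcome, which is a simplification of mine rather than something taken from Kamper or Bowling. It checks the follow-up rate against the 85% mark and compares group averages when everyone is analysed in their allocated group versus when only completers are counted.

```python
from statistics import mean

# Hypothetical records: (allocated group, completed the study?, last recorded score)
participants = [
    ("experimental", True, 7.0),
    ("experimental", True, 6.0),
    ("experimental", False, 3.0),  # dropped out; last available score kept
    ("experimental", True, 8.0),
    ("control", True, 4.0),
    ("control", True, 5.0),
    ("control", True, 3.0),
    ("control", False, 4.0),       # dropped out; last available score kept
]


def follow_up_rate(records):
    """Proportion of the initial participants who completed the study."""
    return sum(1 for _, completed, _ in records if completed) / len(records)


def group_means(records, completers_only):
    """Average score per allocated group, optionally ignoring dropouts."""
    means = {}
    for group in sorted({g for g, _, _ in records}):
        scores = [
            score
            for g, completed, score in records
            if g == group and (completed or not completers_only)
        ]
        means[group] = mean(scores)
    return means


rate = follow_up_rate(participants)
itt = group_means(participants, completers_only=False)        # everyone, as allocated
completers = group_means(participants, completers_only=True)  # dropouts ignored

print(f"Follow-up rate: {rate:.0%} (above 85%? {rate > 0.85})")
print("Analysed as allocated:", itt)
print("Completers only:", completers)
```

In this invented data set the follow-up rate is 75%, and leaving out the dropouts makes the experimental group look better than it does when everyone allocated to it is counted.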

Detection Bias

When conducting a study, researchers often want it to show that their intervention has the effect they theorised and believe it would have (Kamper, 2018(a)). This preference may lead them to change, consciously or unconsciously, how they measure or record outcomes.

For participants, this will mainly affect the outcomes they self-report, leading them, for example, to report doing worse than they actually did if they didn’t get the intervention they thought was better (Kamper, 2018(a)).

Both of these factors will then create bias in the results obtained in that study. To reduce this, what is called ‘blinding’ is applied. For participants, this means making the intervention given to the control group appear as beneficial as the one given to the experimental group, and not letting participants know which one they are receiving (Kamper, 2018(a)).

For researchers, blinding can be achieved by making sure that the person collecting and/or evaluating the data is not the same person applying the intervention and does not know which group the data was collected from (Kamper, 2018(a)).

Performance Bias

Similar to before, often researchers may want the study to show that the intervention is more effective, and the participants are likely to want to be in the study group that receives that intervention (Kamper, 2018(a)).

Because of this, the researchers may not deliver the two interventions with the same confidence or enthusiasm. The participants, on the other hand, may be disappointed if they’re not allocated to the group of their preference, and so may not put as much effort into following the instructions they are given (Kamper, 2018(a)).

This type of bias is overcome in a similar way to detection bias: ensuring that the treatments in the control and experimental groups look equally beneficial, as well as not allowing the person assessing the collected outcomes to know which group they come from (Kamper, 2018(a)).

Reporting Bias/Publication Bias

In the world of research, studies that indicate an association or a positive effect are more likely to be selected for publication than those that show no effect or a negative one, despite the latter two also adding valuable knowledge. As a consequence, researchers may become more likely to, consciously or unconsciously, exaggerate their results or conclusions to show an effect (Bowling, 2014).

Over time, this can mean that the knowledge available on a certain topic is not accurate: if studies are shaped or filtered in order to be selected by publishers, what gets published will not match what is actually happening (Bowling, 2014).
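A small simulation can show how this filtering distorts the picture. The Python sketch below is purely illustrative and rests on assumptions of my own: the treatment truly has no effect, each study has 30 people per group, and only studies whose estimate crosses a simple z > 1.96 cut-off get "published". None of these numbers come from Bowling.

```python
import math
import random
from statistics import mean


def simulate_studies(n_studies=1000, n_per_group=30, seed=0):
    """Simulate studies of a treatment that truly does nothing."""
    rng = random.Random(seed)
    # Standard error of a difference in means when both groups have SD 1.
    standard_error = math.sqrt(2 / n_per_group)
    all_effects, published_effects = [], []
    for _ in range(n_studies):
        treated = [rng.gauss(0, 1) for _ in range(n_per_group)]
        control = [rng.gauss(0, 1) for _ in range(n_per_group)]
        effect = mean(treated) - mean(control)
        all_effects.append(effect)
        # Assumed publication filter: only clearly "positive" results get through.
        if effect / standard_error > 1.96:
            published_effects.append(effect)
    return all_effects, published_effects


all_effects, published_effects = simulate_studies()
print(f"Studies run: {len(all_effects)}, published: {len(published_effects)}")
print(f"Average effect across all studies: {mean(all_effects):.2f}")
if published_effects:
    print(f"Average effect in published studies: {mean(published_effects):.2f}")
```

Because only the studies above the cut-off are kept, the published average is pushed above the cut-off even though the true effect is zero, so someone reading only the published studies would conclude that the treatment works.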

This is a difficult bias to overcome, as that would likely mean changing the whole system through which research gets funded and selected for publication.

Selection Bias

Whenever a study is being conducted, there needs to be a number of people participating in it. We can’t simply test everyone in the world; it would be very impractical. So we have to select a sufficient number of people to be the sample of that study, and often we then have to select again among the people in our initial sample to divide them into the different groups our study may involve, usually the experimental group and the control group.

But what happens if the researcher who selects the people and divides them between groups does so based on their own preference? Because they want the study to be successful, they may allocate to the experimental group only the people who appear more likely to benefit. Or they may put all the people who appear nicer in the group they are going to assess (after all, the researcher is going to have to interact with them a lot, so it may as well be pleasant). Sometimes we also select people based on an unconscious judgement, so even without doing it on purpose, we could end up with study groups with considerable differences in characteristics between them. Because of this, any measurements or assessments we make are likely to be biased and not applicable to the general population (Kamper, 2018(a)).

To reduce this type of bias, the best practice in studies is to randomize the allocation of participants between groups (Kamper, 2018(a)), with it being good practice for the authors to mention this in the text.
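As a rough illustration of what randomising allocation can look like, here is a minimal Python sketch. The participant IDs, the function name and the fixed seed are hypothetical, and it shows simple randomisation into two equal groups only; real trials often use more elaborate schemes and conceal the allocation sequence from the recruiting researcher.

```python
import random


def randomise_allocation(participant_ids, seed=None):
    """Shuffle participants and split them into two groups of (near) equal size."""
    rng = random.Random(seed)  # a fixed seed makes the allocation reproducible
    shuffled = list(participant_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {"experimental": shuffled[:half], "control": shuffled[half:]}


# Hypothetical sample of 20 participants, P01 to P20.
ids = [f"P{n:02d}" for n in range(1, 21)]
groups = randomise_allocation(ids, seed=42)

print("Experimental:", groups["experimental"])
print("Control:", groups["control"])
```

The point is that chance, not the researcher’s preference, decides who ends up in each group, so conscious or unconscious judgements about who is "likely to benefit" cannot shape the groups.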

Quick note that this is in no way an exhaustive list, as different types of study may present different types of bias particular to their design that I have not included here.

Scientific research was developed with the aim of reducing the bias that our minds are prone to when observing and interpreting the world. However, research itself is created and applied by these same biased minds, so some bias inevitably finds its way in. This doesn’t mean we can’t trust scientific research. It means that we must remain critical and be aware that these biases exist. By doing this, we understand that not all studies are equally relevant for giving us knowledge about certain topics, and we can focus more on the information from the studies that are less biased (Kamper, 2018(a)).

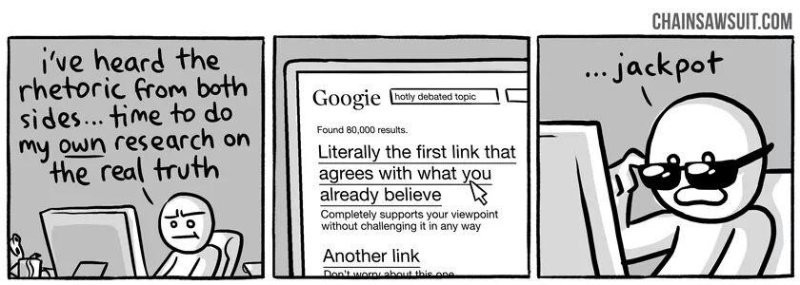

I’ve heard both colleagues and members of the general public comment that scientists keep changing their minds all the time about what is good and what is bad, particularly when it comes to health. Often this is used as a way of dismissing evidence that conflicts with their current beliefs or way of acting, but that is another conversation. To me, this view highlights that the person saying it considers the conclusions of all studies to have the same level of accuracy and importance, which shows a lack of ability to critically analyse information.

This is particularly problematic in clinical practice, as evidence-based practice requires the clinician to include in their reasoning process the careful examination of the type and magnitude of biases that are inevitably present in both clinical experience and research (Kamper, 2018(b)).

Nothing that is created or interpreted by humans is free of bias. But I hope this post has provided you with some help on how to work around this and improve your ability to learn.

Never stop your search for knowledge. See you in the next one.

The Physiolosopher.

References:

Bowling, A. (2014). Research Methods in Health: Investigating Health and Health Services (4th ed.). Open University Press, Berkshire, England.

Kamper, S. J. (2018(a)). Engaging with research: Linking evidence with practice. Journal of Orthopaedic and Sports Physical Therapy, 48(6), 512–513. https://doi.org/10.2519/jospt.2018.0701

Kamper, S. J. (2018(b)). Bias: Linking evidence with practice. Journal of Orthopaedic and Sports Physical Therapy, 48(8), 667–668. https://doi.org/10.2519/jospt.2018.0703